Meta's $21 Billion Deal Secures Deployment Speed, Not Just GPU Access

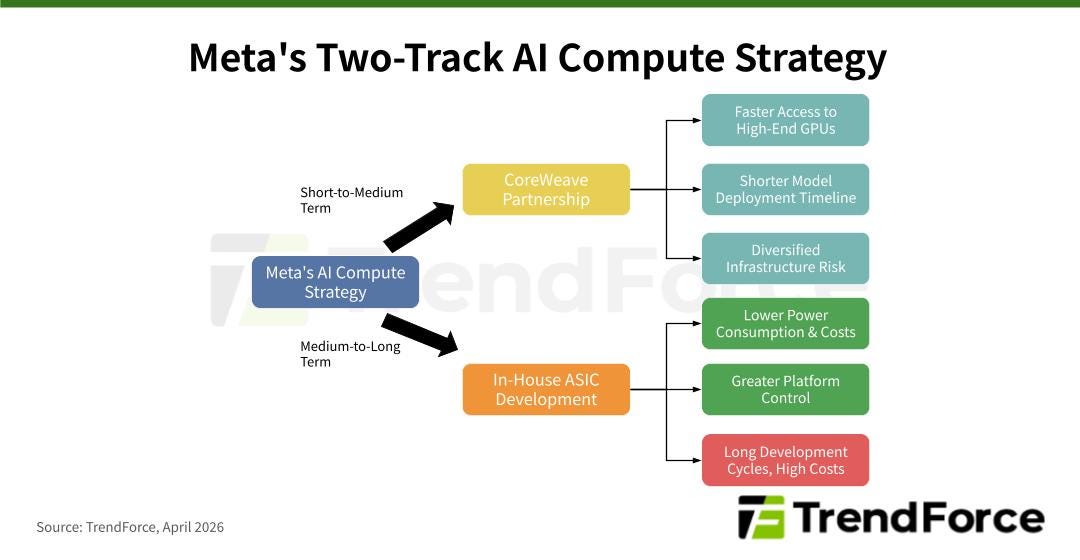

The CoreWeave deal underscores that, in the short to medium term, ready-to-deploy compute remains a more viable option than building out data centers or developing silicon in-house.

On April 9, 2026, Meta announced an additional US$21 billion commitment to purchase compute capacity from AI cloud infrastructure provider CoreWeave. The agreement extends the two companies’ partnership through 2032, and a portion of the capacity will run on NVIDIA’s Vera Rubin platform. The transaction signals that, in the frontier AI race, Meta’s strategy now hinges above all on how quickly it can secure deployment-ready, high-end GPU capacity.

Meta Prioritizes Operational Readiness

Meta’s additional US$21 billion commitment to CoreWeave is driven by more than compute capacity constraints; it is a strategic move to secure production-ready infrastructure that can immediately support inference and model serving. According to disclosures from both parties, the dedicated capacity will be deployed across multiple locations and will include some of the first deployments of NVIDIA’s Vera Rubin platform, which is expected to improve the performance, resilience, and scalability of Meta’s AI operations.

CoreWeave also stated that the collaboration centers on supporting Meta’s large-scale AI inference requirements. In effect, Meta is procuring a full suite of cloud delivery capabilities optimized for AI workloads: high-performance GPU clusters, networking, storage, and rapidly deployable infrastructure.

The expected advantages for Meta are threefold:

Rapidly closing compute gaps for training, inference, and product deployment as frontier model competition intensifies;

Securing early access to next-generation Rubin platform resources, avoiding the lead times of in-house data center build-out and equipment procurement;

Externalizing part of the infrastructure build-out and delivery risk, allowing Meta to concentrate resources on model R&D and product execution.

In-House ASIC Development Continues; Training Chips Are the Primary Bottleneck

Meta has not abandoned in-house ASIC development. In March 2026, the company unveiled a roadmap for its MTIA 300, 400, 450, and 500 series. The MTIA 300 is already integrated into Meta’s ranking and recommendation systems, with subsequent generations slated for phased rollouts. This indicates that Meta still views in-house ASICs as a critical medium-to-long-term strategy for reducing power consumption, lowering costs, and increasing platform control.

Meta’s primary bottleneck remains the development speed and maturity of its training chips for generative AI. Training chips are considerably harder than inference chips to architect, integrate at the system level, and validate for mass production, because training must sustain higher numerical precision, larger memory footprints, and tightly coupled communication across thousands of accelerators.

Chip development from tape-out to mass production is inherently expensive, time-consuming, and exposed to failure risk. Meta itself canceled one in-house chip program after small-scale testing, subsequently ramped up NVIDIA GPU procurement, and has reportedly leased Google TPUs to support model development. This highlights a key reality: ASICs can deliver superior power and cost efficiency for specific, stable workloads that lend themselves to optimization. For frontier model training and general-purpose generative AI compute, however, they still face significant challenges in architectural design, hardware-software co-optimization, manufacturing yields, development cycles, and ecosystem maturity.

Meta’s case illustrates that, in the frontier AI race, merchant GPUs and cloud compute remain the most viable short-to-medium-term solutions, while in-house ASICs serve as a parallel, longer-term path to lower costs and greater platform control.