The Great Rebalance: How Agentic AI Is Reshaping the CPU:GPU Ratio

Agentic AI is driving a structural shift in CPU:GPU ratios — and triggering a supply crunch that has Intel and AMD raising prices.

In March 2026, two announcements arrived in quick succession: Nvidia began selling its Vera CPU as a standalone product, and Arm unveiled its first in-house CPU, the Arm AGI CPU. That a GPU company and an IP licensing firm both chose to enter the CPU market in the same month is not a coincidence. It reflects a structural shift in how AI data centers think about CPU demand.

CPU’s Role in Agentic AI

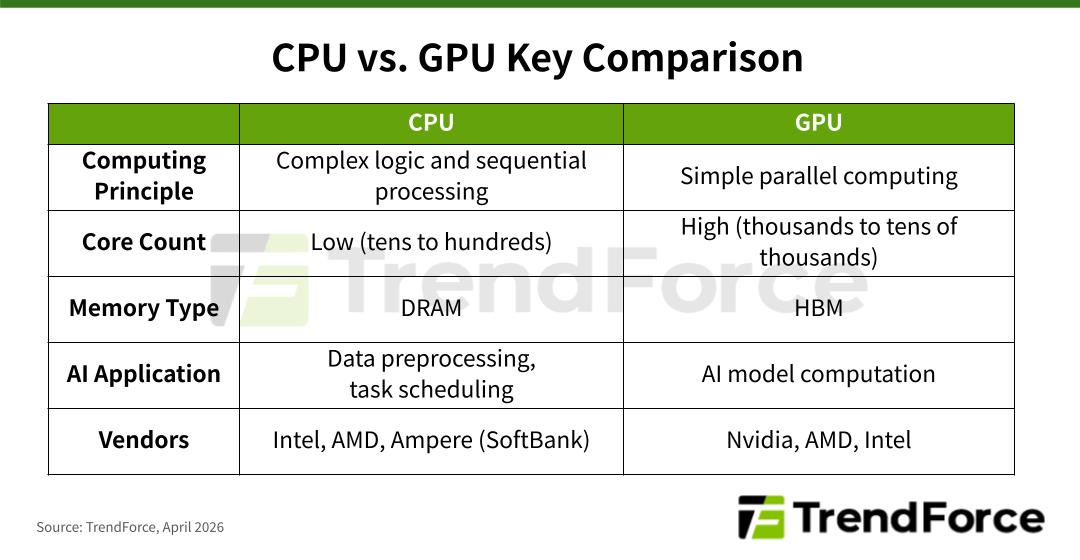

Historically, CPUs have served as the “brains” of data center servers—handling complex logic, sequential processing, and classical machine learning workloads such as linear regression and decision trees.

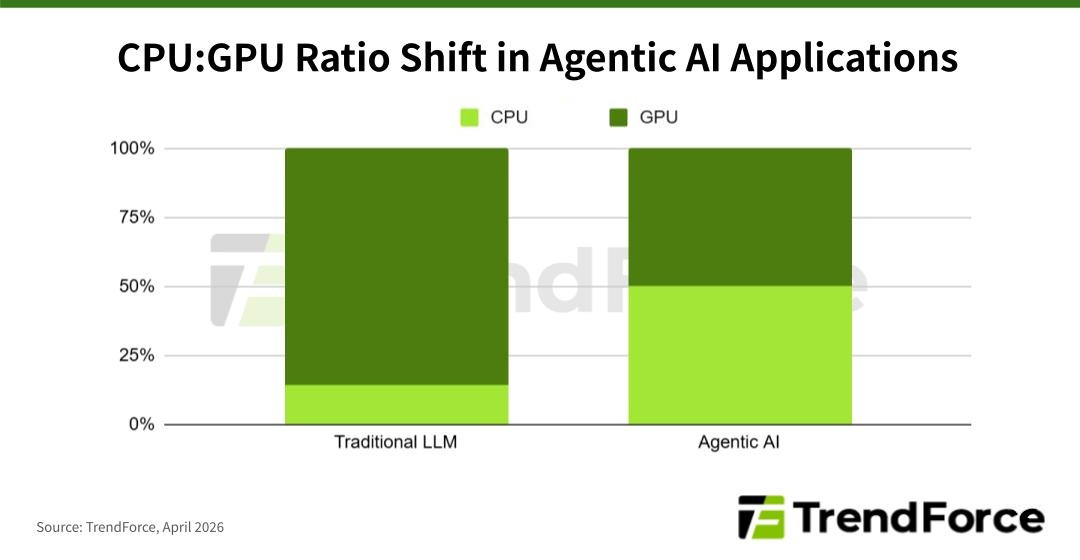

The AI boom changed that dynamic. AI models now require large-scale parallel matrix multiplication, which GPUs, with their massively parallel architectures, are built to handle. CPUs were relegated to compressing and routing memory data to GPUs. As a result, today’s AI data centers operate at CPU-to-GPU ratios of roughly 1:4 to 1:8.

Related report: 2026 Agentic AI Wave: CPU Shortage and GPU Ratio Structural Changes

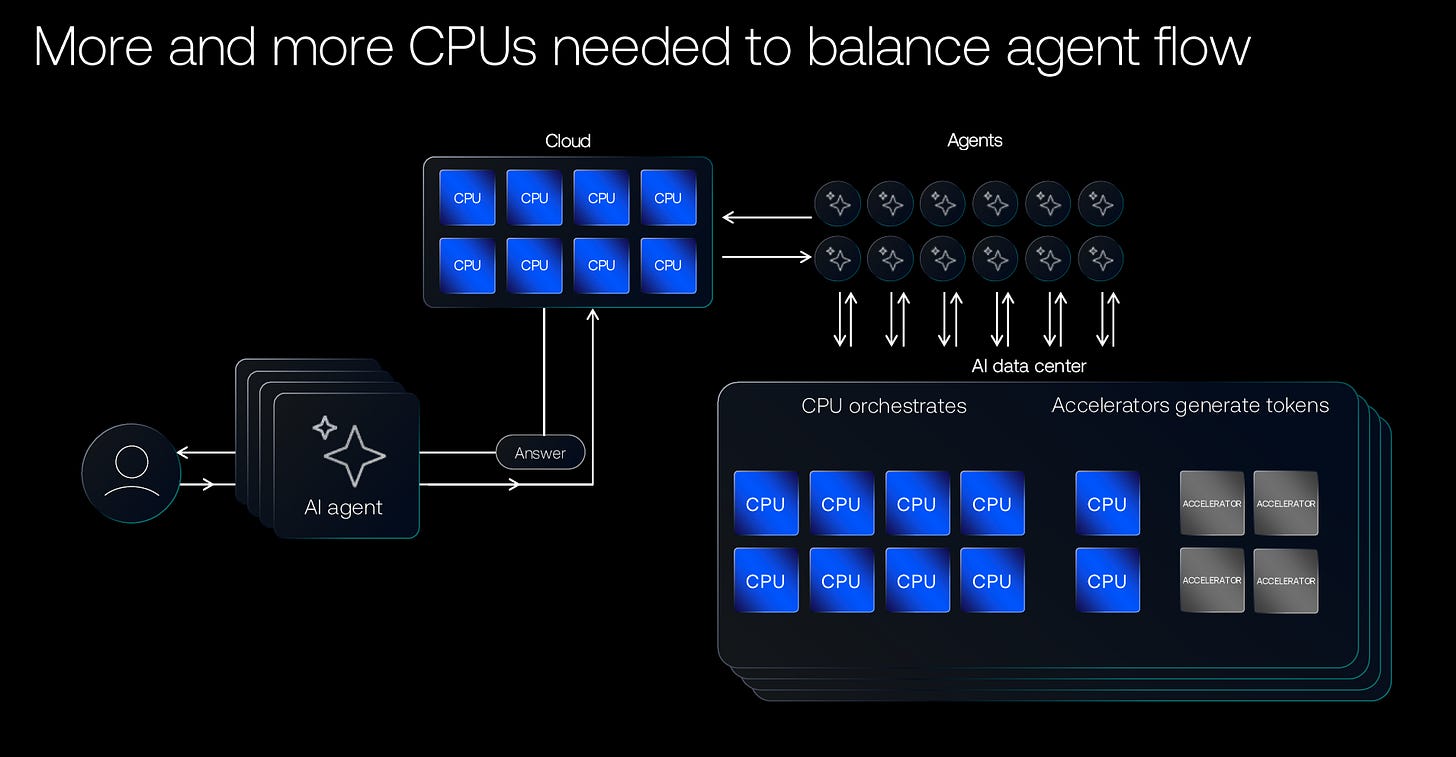

The rise of AI agents is changing that balance. Unlike static LLMs, Agentic AI is designed to interact dynamically with its environment: planning tasks, calling tools, making decisions, and taking actions on behalf of users. The coordination layer that manages all of this (scheduling sub-tasks, routing tool calls, passing data between sub-agents, and evaluating whether the original request has been fulfilled) falls squarely on the CPU. This is what makes orchestration a CPU-intensive workload.
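The coordination steps described above can be sketched as a small loop. This is a minimal illustration only, not any vendor's agent framework: the tools, the `plan` stub (which stands in for a model call that would normally run on the GPU), and the `orchestrate` function are all hypothetical names chosen for the example.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tools. In a real agent these might be a Python interpreter,
# a web crawler, or a database query.
def word_count(text: str) -> int:
    return len(text.split())

def to_upper(text: str) -> str:
    return text.upper()

TOOLS: Dict[str, Callable[[str], object]] = {
    "word_count": word_count,
    "to_upper": to_upper,
}

def plan(request: str) -> List[Tuple[str, str]]:
    """Stand-in for the model's planning step: returns (tool, argument) pairs."""
    return [("to_upper", request), ("word_count", request)]

def orchestrate(request: str) -> Dict[str, object]:
    """CPU-side coordination: schedule sub-tasks, route tool calls, evaluate."""
    steps = plan(request)
    results: Dict[str, object] = {}
    for tool_name, arg in steps:        # scheduling sub-tasks
        tool = TOOLS[tool_name]         # routing the tool call
        results[tool_name] = tool(arg)  # executing the tool
    # Evaluation: every planned step must have produced a result.
    results["fulfilled"] = all(name in results for name, _ in steps)
    return results

if __name__ == "__main__":
    print(orchestrate("plan the trip"))
```

Everything outside `plan` in this sketch is ordinary sequential logic, which is the kind of work the article attributes to the CPU.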

Agentic RL adds further demand. When AI agents are trained through Reinforcement Learning (RL), each action the agent takes must be evaluated—a process that places additional load on the CPU.

A November 2025 paper, “A CPU-Centric Perspective on Agentic AI,” noted that CPUs remain crucial for tool processing tasks such as Python interpretation, web crawling, lexical summarization, and database searches, all of which remain prevalent in Agentic AI. AI agents may face three primary CPU-related bottlenecks:

Latency: Tool processing on CPUs can account for up to 90.6% of total latency.

Throughput: Bottlenecks can stem from CPU factors (number of cores, coherence and synchronization) or GPU factors (main memory capacity and bandwidth).

Energy: CPU dynamic energy consumption can reach 44% of the total dynamic energy at large batch sizes.
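To see why the latency figure matters, one can apply Amdahl's law to it. The framing below is an illustration added here, not the paper's own analysis: it takes the worst-case 90.6% share at face value and compares two hypothetical scenarios.

```python
import math

def amdahl_speedup(fraction_accelerated: float, factor: float) -> float:
    """Overall speedup when `fraction_accelerated` of latency runs `factor` times faster."""
    return 1.0 / ((1.0 - fraction_accelerated) + fraction_accelerated / factor)

# Worst-case share of total latency spent in CPU tool processing (figure cited above).
CPU_TOOL_SHARE = 0.906

# Hypothetical scenario A: everything except CPU tool processing becomes infinitely fast.
non_cpu_infinite = amdahl_speedup(1.0 - CPU_TOOL_SHARE, math.inf)

# Hypothetical scenario B: only CPU tool processing becomes 2x faster.
cpu_2x = amdahl_speedup(CPU_TOOL_SHARE, 2.0)

print(f"non-CPU part infinitely faster: {non_cpu_infinite:.2f}x")
print(f"CPU tool processing 2x faster:  {cpu_2x:.2f}x")
```

Under these assumptions, an infinitely fast GPU path yields only about a 1.10x end-to-end gain, while doubling CPU-side speed yields about 1.83x.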

To address these, the traditional CPU:GPU ratio must change. Arm estimates that while traditional AI data centers require about 30 million CPU cores per GW, demand will surge to 120 million CPU cores per GW in the AI Agent era—a fourfold increase. The future CPU-to-GPU ratio is expected to shift to between 1:1 and 1:2, significantly boosting market demand for CPUs.
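The figures in this paragraph can be cross-checked with a few lines of arithmetic. This is a rough sketch: it treats each ratio as CPUs per GPU and compares the range endpoints. Note that cores per GW and CPUs per GPU are different metrics, so the two growth figures are not expected to match exactly.

```python
from fractions import Fraction

def cpus_per_gpu(cpu: int, gpu: int) -> Fraction:
    """Express a CPU:GPU ratio as CPUs per GPU."""
    return Fraction(cpu, gpu)

# Arm's estimate: CPU cores per GW, traditional AI data center vs. AI agent era.
core_growth = Fraction(120_000_000, 30_000_000)

# Today's range (1:8 to 1:4) and the expected range (1:2 to 1:1).
today_low, today_high = cpus_per_gpu(1, 8), cpus_per_gpu(1, 4)
future_low, future_high = cpus_per_gpu(1, 2), cpus_per_gpu(1, 1)

# Smallest and largest growth in CPUs per GPU implied by the two ranges.
min_growth = future_low / today_high   # (1/2) / (1/4)
max_growth = future_high / today_low   # (1/1) / (1/8)

print(f"core growth per GW: {core_growth}x")
print(f"CPUs per GPU growth: {min_growth}x to {max_growth}x")
```

The fourfold increase in cores per GW falls inside the 2x to 8x range implied by the ratio shift.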

CPU demand has surged across both AI workloads and general-purpose servers within data centers. Intel and AMD responded by raising prices across select CPU product lines toward the end of 1Q26.

Related report: Trends to Watch 2026: Agentic AI and Real-time Inference

2026 CPU Market Landscape

This demand shift is reshaping the competitive landscape. Beyond traditional server CPU vendors Intel and AMD, a new wave of non-traditional players is now entering the server CPU market: GPU maker Nvidia, IP licensor Arm, and major cloud service providers (CSPs) including AWS, Google, and Microsoft.

Traditional x86 Vendors: Intel and AMD

Intel’s Xeon processors long commanded over 95% of the data center CPU market. That dominance began to erode in 2021, when yield issues with the Intel 7 process delayed the Xeon Sapphire Rapids launch by nearly two years, creating an opening for AMD’s EPYC Milan (built on TSMC N7 with the Zen 3 architecture) to make meaningful inroads.

For 2026, Intel is planning two major launches, both on its most advanced Intel 18A process, reaffirming its commitment to in-house fabrication:

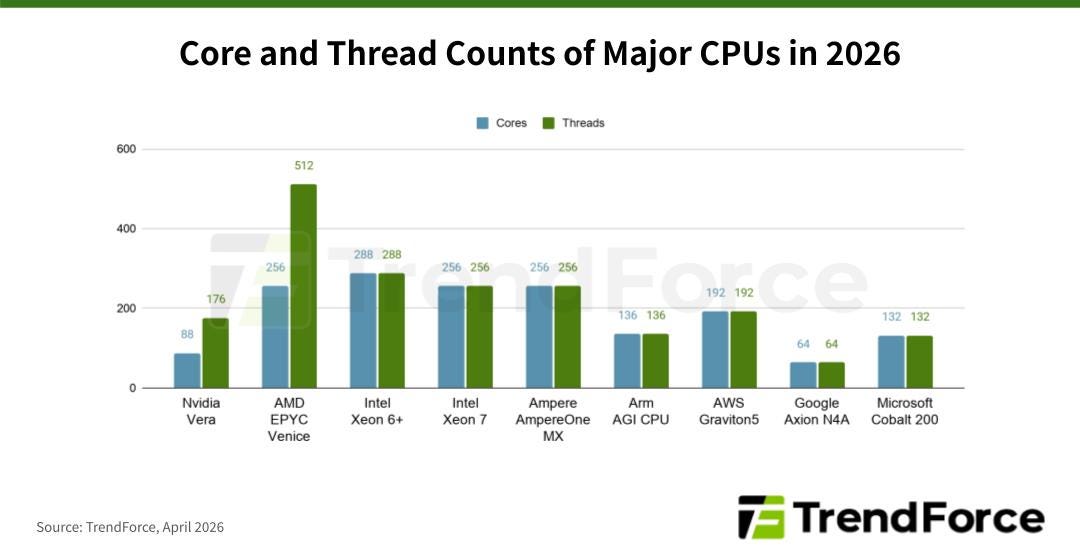

Xeon 6+ (Clearwater Forest): Darkmont architecture, 288/288 cores/threads, TDP ~450W, featuring Foveros Direct Hybrid Bonding for the first time.

Xeon 7 (Diamond Rapids): Panther Cove-X architecture, up to 256/256 cores/threads, TDP up to 650W.

However, due to ongoing yield issues with the 18A process, mass production for both may be delayed until 2027.

Meanwhile, AMD continues its partnership with TSMC. Its EPYC Venice, expected in 2026, will use the Zen 6 architecture, TSMC N2 process, and advanced packaging (CoWoS-L, SoIC), featuring 256/512 cores/threads. AMD is expected to continue gaining market share from Intel in 2026.

Arm-Based Entrants: Nvidia, Arm, Ampere, and the CSPs

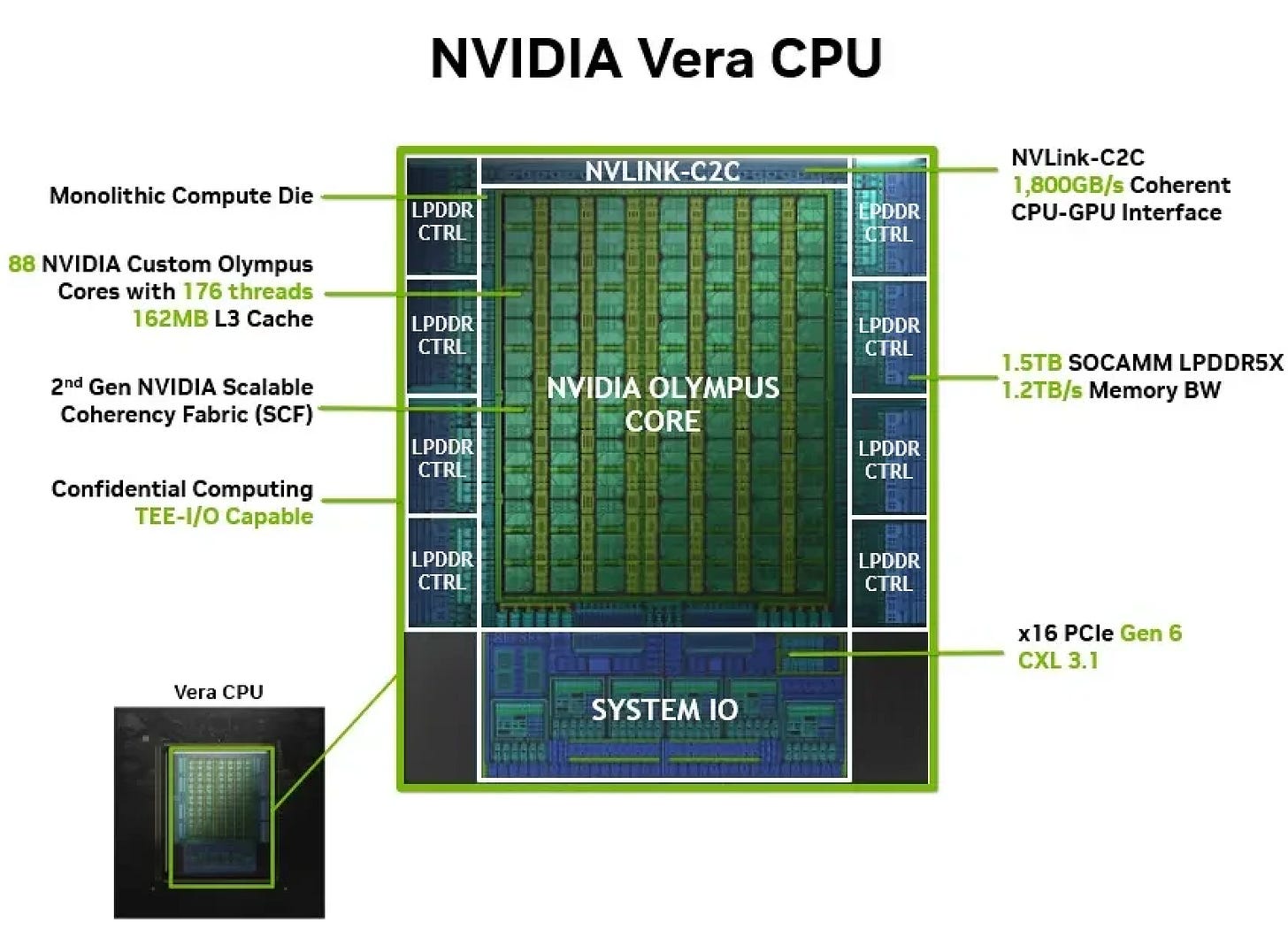

In March 2026, Nvidia announced it would begin selling the Vera CPU as a standalone product, responding to customer demand for more flexible CPU:GPU configurations. Early partners include Alibaba, ByteDance, Cloudflare, CoreWeave, Crusoe, Lambda, Nebius, Nscale, Oracle, Together.AI, and Vultr.

Vera uses Nvidia’s custom Olympus architecture, the TSMC N3 process, and CoWoS-R packaging, and features 88 cores/176 threads with Spatial Multithreading. While its core count is lower than that of Intel or AMD flagships, it offers a 1.8 TB/s NVLink-C2C interconnect for memory sharing with Nvidia GPUs.

Related report: 2026 NVIDIA AI Outlook: From GPU to LPU Racks & Inference

Nvidia also introduced the Vera CPU Rack, built on its MGX architecture. A single rack features 32 × 1U compute trays, each housing 4 Vera CPU Nodes, totaling 256 CPUs (22,528/45,056 cores/threads and 400 TB of memory). These racks are interconnected via BlueField-4 DPUs—which integrate Grace CPUs and ConnectX-9—ensuring high integration within the Nvidia ecosystem.
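The rack totals follow from the per-CPU figures. In the sketch below, the two-CPUs-per-node value is not stated in the text; it is inferred from 256 CPUs spread across 32 × 4 nodes and should be read as an assumption.

```python
# Figures quoted in the article.
TRAYS_PER_RACK = 32
NODES_PER_TRAY = 4
CPUS_PER_RACK = 256
CORES_PER_CPU = 88
THREADS_PER_CORE = 2  # 88 cores / 176 threads per Vera CPU

nodes = TRAYS_PER_RACK * NODES_PER_TRAY

# Inferred, not stated in the article: CPUs per node implied by the totals.
cpus_per_node = CPUS_PER_RACK // nodes

cores = CPUS_PER_RACK * CORES_PER_CPU
threads = cores * THREADS_PER_CORE

print(f"nodes per rack:  {nodes}")
print(f"CPUs per node:   {cpus_per_node} (inferred)")
print(f"cores / threads: {cores:,} / {threads:,}")
```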

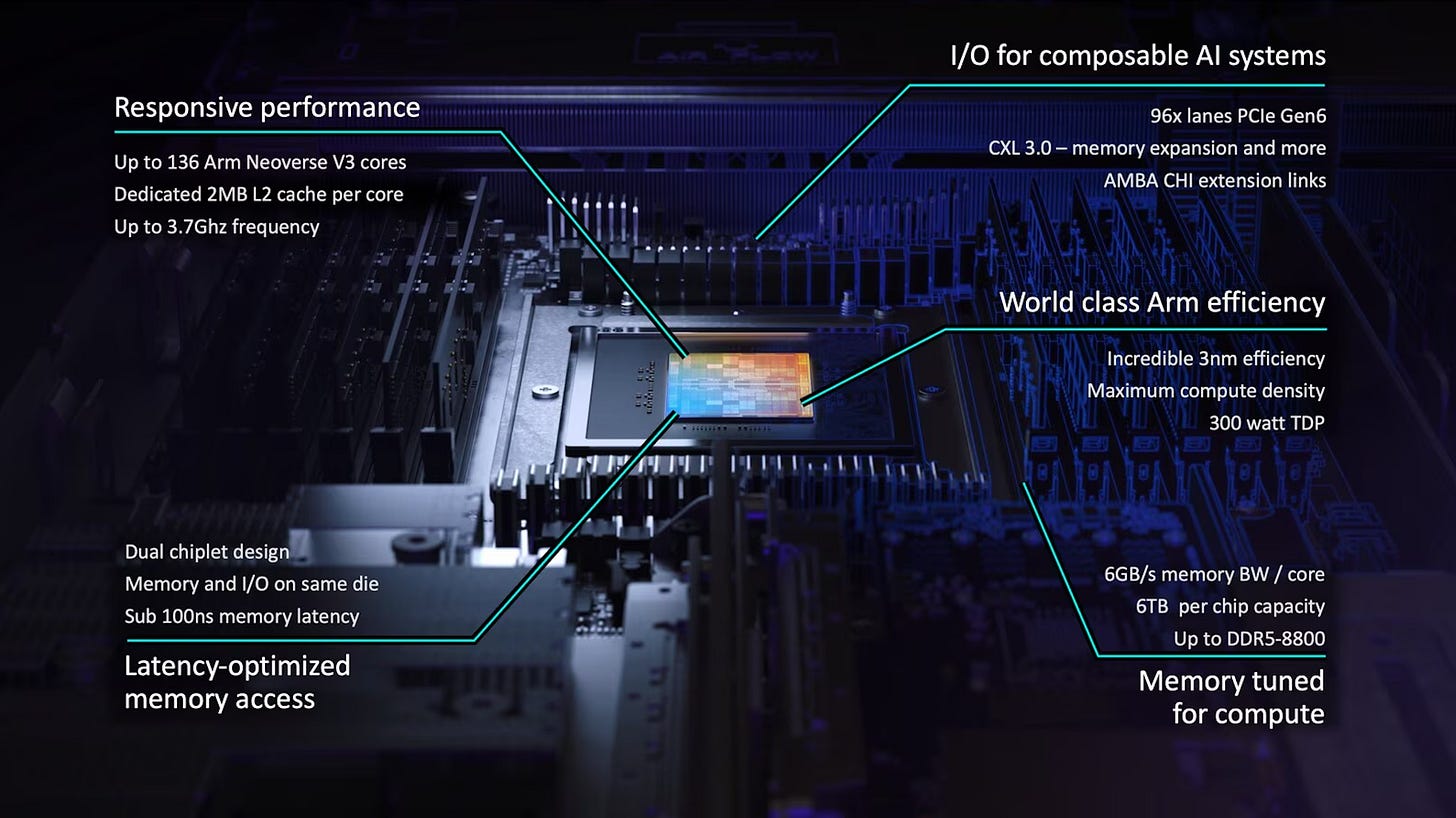

Also in March 2026, Arm took an unprecedented step into the CPU product market with the Arm AGI CPU, ending 35 years of pure licensing. Built on TSMC N3 with the Arm Neoverse V3 architecture, it offers 136/136 cores/threads. Launch partners include Meta, Cerebras, Cloudflare, F5, OpenAI, Positron, Rebellions, SAP, and SK Telecom.

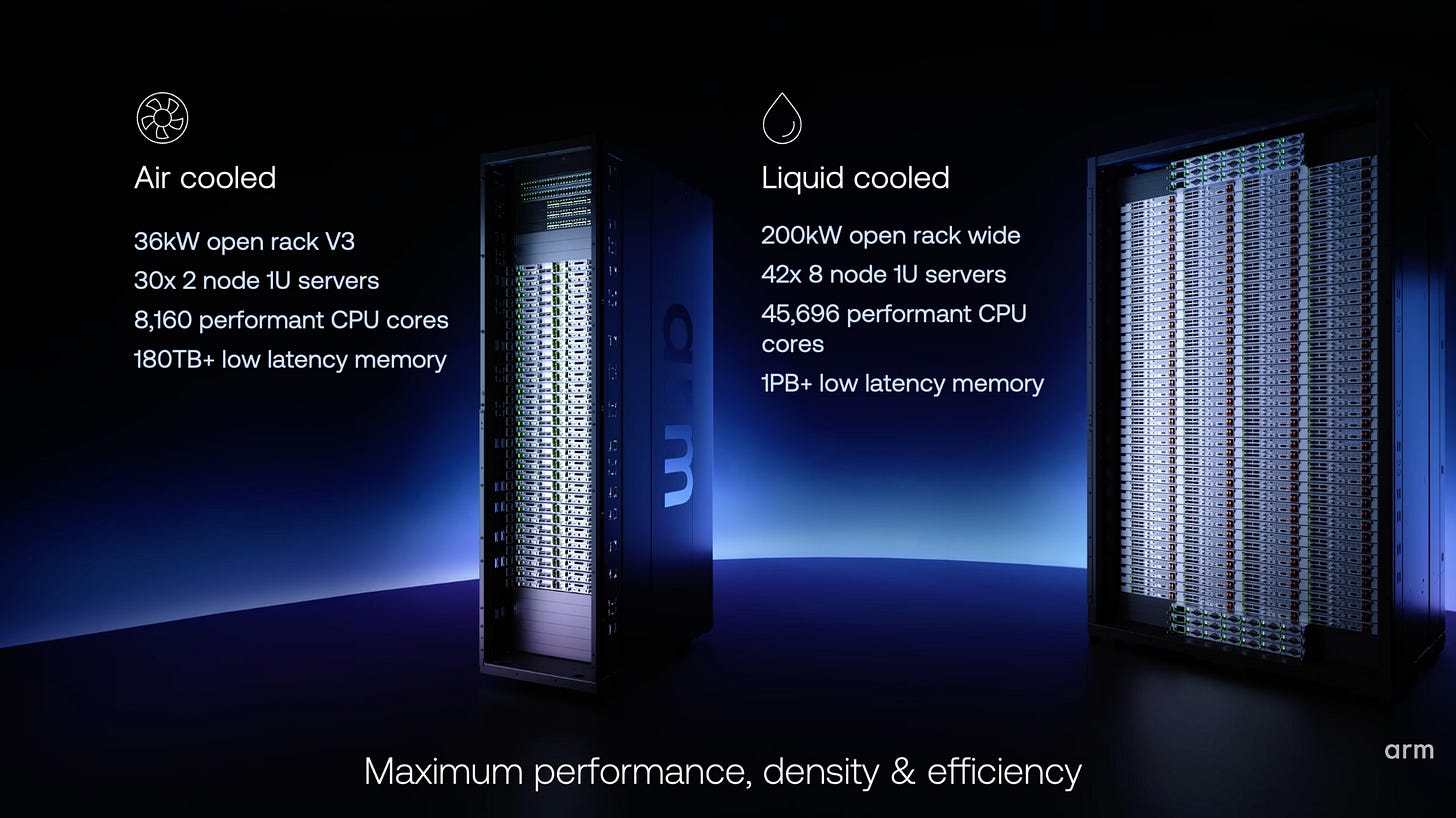

Arm has introduced standalone CPU racks in two configurations: an air-cooled version integrating 60 AGI CPUs (8,160/8,160 cores/threads, ~180 TB memory), and a liquid-cooled version supporting 336 CPUs (45,696/45,696 cores/threads, 1 PB memory).
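The two rack configurations can be checked against the 136-core figure, and the memory totals converted to a per-CPU value. This is a back-of-the-envelope sketch that assumes 1 PB is 1,000 TB and treats the approximate 180 TB figure as exact.

```python
CORES_PER_AGI_CPU = 136  # one thread per core, per the article

# Rack figures quoted in the article. 1 PB is taken as 1,000 TB (assumption).
RACKS = {
    "air-cooled": {"cpus": 60, "memory_tb": 180},
    "liquid-cooled": {"cpus": 336, "memory_tb": 1000},
}

summary = {}
for name, rack in RACKS.items():
    summary[name] = {
        "cores": rack["cpus"] * CORES_PER_AGI_CPU,
        "memory_tb_per_cpu": rack["memory_tb"] / rack["cpus"],
    }

for name, row in summary.items():
    print(f"{name}: {row['cores']:,} cores, "
          f"{row['memory_tb_per_cpu']:.2f} TB memory per CPU")
```

Both configurations work out to roughly 3 TB of memory per CPU, which suggests the two rack figures describe the same per-CPU memory provisioning.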

Related report: Arm AGI CPU: Reshaping AI Data Center Architecture

Following the release of the 192-core AmpereOne and AmpereOne M, SoftBank-backed Ampere is expected to launch the AmpereOne MX in 2026, which will feature an increased core and thread count of 256/256.

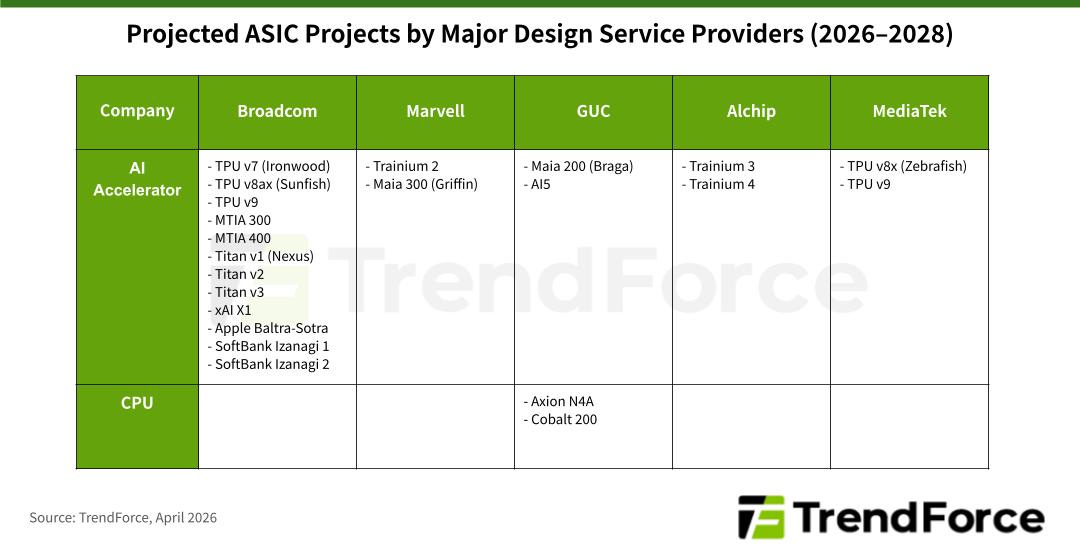

Among the major U.S. CSPs, AWS was the earliest mover, launching its first in-house Graviton CPU in 2016 using the TSMC N16 process. In December 2025, it released Graviton5 on TSMC N3 (192/192 cores/threads). By pairing these custom CPUs with its Trainium 3 AI ASIC, AWS can lower the cost of AI computation.

Microsoft became the second CSP to develop an in-house CPU, debuting the Cobalt 100 CPU alongside the Maia 100 AI ASIC in November 2023 using TSMC’s N5 process. In November 2025, the company introduced the next-generation Cobalt 200, which uses the N3 process and provides 132/132 cores/threads.

Google followed suit in April 2024 with the launch of its first custom CPU, the Axion C4A, based on the TSMC N5 process. For 2026, Google plans to introduce the bare-metal Axion C4A.metal (96/96 cores/threads) and the next-generation Axion N4A (64/64 cores/threads), with the strategic goal of delivering the best price-performance in the market.

Below we summarize the core and thread counts of major CPUs in 2026. The Intel Xeon 6+ (Clearwater Forest) has the highest core count at 288, followed by the AMD EPYC Venice, Intel Xeon 7 (Diamond Rapids), and AmpereOne MX, each with 256 cores. Because it uses Simultaneous Multithreading (SMT), however, the AMD EPYC Venice can run 512 threads across its 256 cores, the highest thread count among them.
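The comparison can also be expressed as data. The sketch below collects only the core and thread counts quoted in this article (not an exhaustive list of 2026 server CPUs) and ranks them; the `Cpu` type and variable names are illustrative.

```python
from typing import List, NamedTuple

class Cpu(NamedTuple):
    name: str
    cores: int
    threads: int

# Core/thread counts as quoted in the article.
CPUS: List[Cpu] = [
    Cpu("Intel Xeon 6+ (Clearwater Forest)", 288, 288),
    Cpu("Intel Xeon 7 (Diamond Rapids)", 256, 256),
    Cpu("AMD EPYC Venice", 256, 512),
    Cpu("Nvidia Vera", 88, 176),
    Cpu("Arm AGI CPU", 136, 136),
    Cpu("AmpereOne MX", 256, 256),
    Cpu("AWS Graviton5", 192, 192),
    Cpu("Microsoft Cobalt 200", 132, 132),
    Cpu("Google Axion C4A.metal", 96, 96),
    Cpu("Google Axion N4A", 64, 64),
]

# Rank by core count, breaking ties by thread count.
by_cores = sorted(CPUS, key=lambda c: (c.cores, c.threads), reverse=True)
most_cores = max(CPUS, key=lambda c: c.cores)
most_threads = max(CPUS, key=lambda c: c.threads)

for cpu in by_cores:
    print(f"{cpu.name:<36}{cpu.cores:>5}{cpu.threads:>5}"
          f"  ({cpu.threads // cpu.cores} thread(s) per core)")
```

Only the AMD EPYC Venice and Nvidia Vera entries run two threads per core; every other part listed here is one thread per core.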

TrendForce expects the growing trend of major CSPs developing custom CPUs to create additional business opportunities for IC back-end design service providers. Currently, AWS keeps its design in-house, while both Google and Microsoft outsource CPU back-end design to Global Unichip Corp. (GUC). The following table summarizes the projects that ASIC design service providers are expected to secure between 2026 and 2028.

Quick Recap

Agentic AI Deployment Drives Structural CPU Demand: GPUs have long dominated AI workloads requiring large-scale parallel computation, with CPUs playing a supporting role in memory management. As Agentic AI matures, CPUs are regaining strategic importance—handling the orchestration, tool-calling, and evaluation steps that define agentic workflows. TrendForce expects CPU:GPU ratios to shift from 1:4–1:8 toward 1:1–1:2 in Agentic AI deployments.

Intel’s in-house process yield issues may accelerate AMD’s market share gains: Intel’s planned 2026 launches—Xeon 6+ and Xeon 7, both on Intel 18A—face ongoing yield challenges that could push production into 2027. AMD’s EPYC Venice, built on TSMC N2 with CoWoS-L and SoIC packaging and scaling up to 512 threads via SMT, is well-positioned to continue taking share from Intel through 2026.

Nvidia, Arm, and major CSPs entering the CPU market is creating opportunity for IC back-end design service providers: Beyond traditional x86 vendors, a growing roster of non-traditional players (Nvidia, Arm, AWS, Google, and Microsoft) is now active in the server CPU space. TrendForce expects this trend to generate incremental demand for IC back-end design services: Google and Microsoft have already engaged GUC for CPU back-end design work, while AWS continues to develop in-house.
